What happens when Filter Bubbles start producing effects in the physical space of the city? Separation. This is the concept of the Constrained Cities, launched for the first time at the Post Internet Cities conference in Lisbon.

For the Post Internet Cities conference we presented this article: Constrained Cities: the publication

[Constrained Cities, here on E-Flux]

It is a peculiar article: it is a story, a love story, and it has a sad ending.

Each part of the story is backed up by sound research evidence. It is a carefully designed scientific fiction which is actually possible and supported by research. It is a story which would become possible (or even common) in the Constrained Cities.

But what are the Constrained Cities?

Let’s run a little experiment to understand the concept.

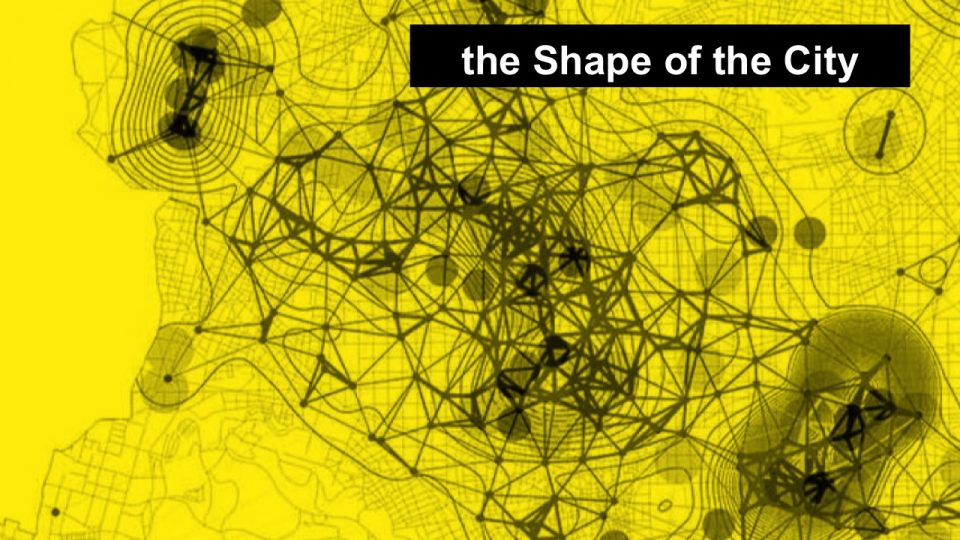

Let’s take a city (in the next images it’s Bologna). Let’s find a way to check if and how different people get different perceptions of the city when they run geographically relevant queries on search engines (on Google, TripAdvisor, Yelp, Facebook).

For this, let’s design a set of 10,000 (ten thousand) queries to look for restaurants, swimming pools, a nice cinema in which to see a certain movie, where to buy certain products, etc.

Let’s use a piece of software to:

- have people log in to their accounts on these services (Google, TripAdvisor, Yelp, Facebook)

- take the queries one by one and execute them

- store the results, in the form: the answer to query X was result Y at (lat,lon) coordinates
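A minimal sketch of these steps, under stated assumptions: the record type `QueryResult`, the function `run_queries` and the caller-supplied `search_fn` are hypothetical names introduced here for illustration, not the software used in the experiment. How each service is actually queried is left to `search_fn`, since that depends on the service and on the logged-in account.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass(frozen=True)
class QueryResult:
    """One stored row: the answer to query X was result Y at (lat, lon)."""
    user: str      # who was logged in when the query ran, e.g. "A" or "B"
    service: str   # which service answered
    query: str     # query X
    result: str    # result Y
    lat: float
    lon: float


# Hypothetical adapter: takes (user, query), yields (name, lat, lon) tuples.
SearchFn = Callable[[str, str], Iterable[Tuple[str, float, float]]]


def run_queries(user: str, service: str, queries: Iterable[str],
                search_fn: SearchFn) -> List[QueryResult]:
    """Take the queries one by one, execute them, and store the results."""
    records: List[QueryResult] = []
    for query in queries:
        for name, lat, lon in search_fn(user, query):
            records.append(QueryResult(user, service, query, name, lat, lon))
    return records
```

Running `run_queries` once per person with the same list of queries gives two sets of records that can then be compared place by place.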

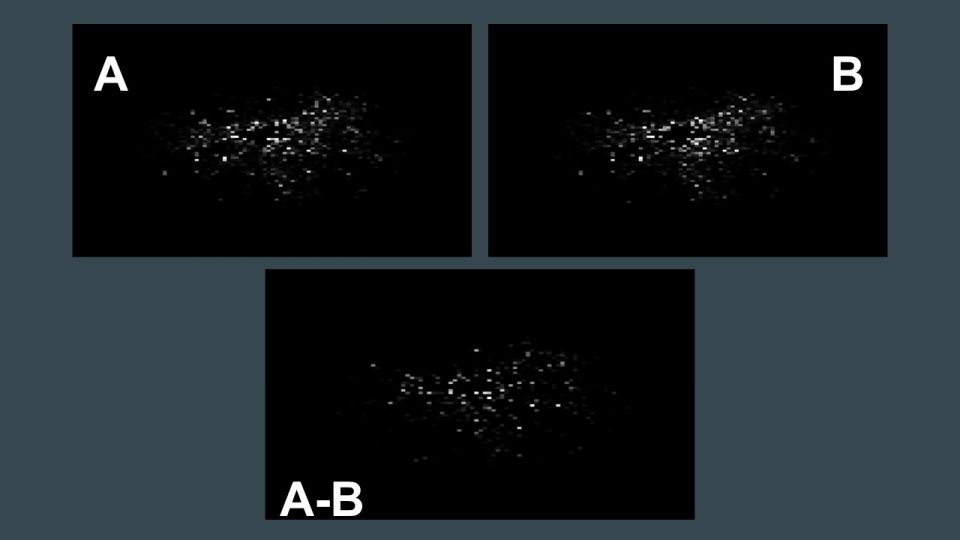

Let’s plot the results for two candidates, A and B (random friends at Art is Open Source).

In the image you see first the results for A, then the results for B and then their differences. In the images: each dot is a geographical coordinate in Bologna (we have removed the geo cartography from under the results to focus on the dots); a white dot means that the queries returned at least one result in that place; the whiter the dots, the more results in that place.

The difference is calculated by subtracting dots B from dots A, which means that if, at a certain geographical point, A has a value of 20 and B has a value of 5, the resulting value in the A-B image will be 15 (and, thus, a certain degree of whiteness).
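The subtraction can be sketched as follows. This is an illustration under assumptions, not the original code: the grid cell size `cell_deg` and the function names are made up for the example, and the signed value is kept for places where B has more results than A, since the text does not say how those are drawn.

```python
import math
from collections import Counter
from typing import Dict, Iterable, Tuple

Cell = Tuple[int, int]


def count_by_cell(points: Iterable[Tuple[float, float]],
                  cell_deg: float = 0.01) -> Counter:
    """Count how many results fall into each grid cell (one cell = one dot)."""
    counts: Counter = Counter()
    for lat, lon in points:
        cell = (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
        counts[cell] += 1
    return counts


def difference(a: Counter, b: Counter) -> Dict[Cell, int]:
    """Subtract B's count from A's count in every cell that either one has."""
    return {cell: a[cell] - b[cell] for cell in set(a) | set(b)}


# The example from the text: A has 20 results at a point, B has 5.
a_counts = Counter({(4449, 1134): 20})
b_counts = Counter({(4449, 1134): 5})
print(difference(a_counts, b_counts))  # {(4449, 1134): 15}
```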

As you can see there is a strong difference. About 22% actually, for the city of Bologna.

What does this difference mean?

Let’s think about it.

It means that if I were A, and I wanted to ask where to eat Japanese food in Bologna, starting from a specific location in the city, I would have a 22% probability of being pointed to a restaurant different from the one B would have been pointed to in the same situation.

Same equals Different.

Same place, same question, different people = different answers.

Why?

source: screenshot from “charting culture”, Nature, https://www.youtube.com/watch?v=4gIhRkCcD4U

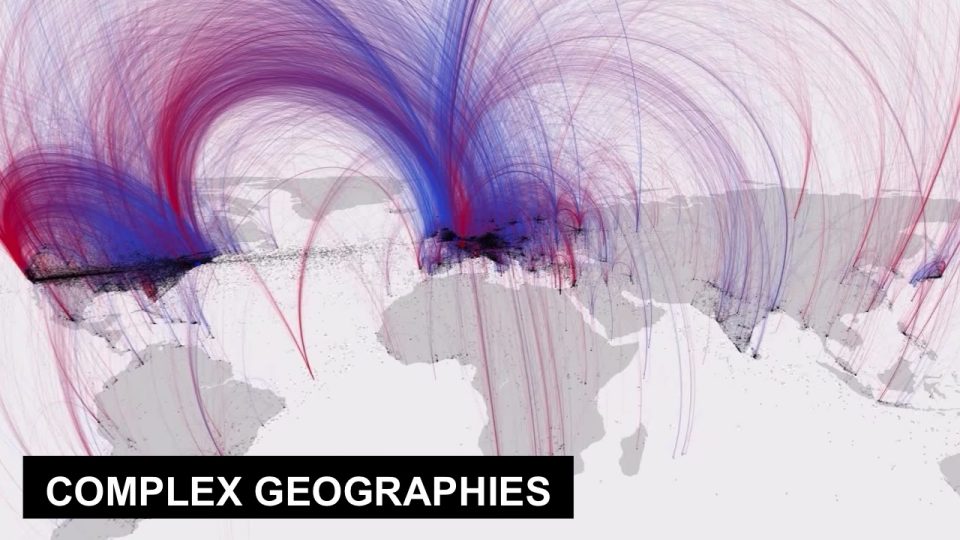

Complex geographies

When we search for something on the web or mobile web, what we get is a complex geography of results: a financial, psychological, cultural, behavioral, political, algorithmic, machine-learned geography and, oh, also an actual geography, because something is actually there.

Some indications we receive because they are ads, or because the business paid for the premium service. Some we receive because the service knows that we like Japan from our books and purchases on Amazon. Some we receive because one of the friends we chat with a lot checked in to that place and gave it 4 stars out of 5. Some we don’t receive because the place’s server was associated with spam messages, even if it’s the best restaurant in the city. Etc.

source: Visualizing cities http://cityvis.io/detail.php?id=68

These indications we receive are not neutral: they define our perceived shape of the city.

These data transform how we perceive the city. They transform the wheres, whens, hows we will use the city.

They affect the readability and knowability of the city.

Wrong Directions: this is true also for the directions on how to get to a place.

It is not big news anymore when someone falls into a chasm because they followed Google Maps’ directions. They chose to believe the digital directions more than they trusted their own eyes.

For example, we could repeat the experiment using directions instead of points. The image above shows 10k points and the directions from one to the other in Rome. As you can see the algorithms never send us in the spaces between the red lines: are they becoming invisible for us?

source: https://deepdreamgenerator.com/

We are entering a state of constant Algorithmic Hallucination: algorithms influence and distort our perception of reality.

In dealing with Hallucination, the concept of Telepathy comes in handy.

Freud defines telepathy as “when a psychic act of a person is realized in the same psychic forms in another person”, and he considered it an archaic mode of communication between individuals.

source: “Crisis in Psychoanalysis” by Don Ivan Punchatz, https://americangallery.files.wordpress.com/2012/06/crisis-in-psychoanalysis.jpg

Using this definition, telepathy can be seen as an instrument to understand hallucination, as a tool to compare “psychic acts”: using Telepathy, under Freud’s definition, allows me to re-create a psychic act (such as a perception, or a hallucination) in my brain, to compare it with what I experience and know. Otherwise it is impossible to compare psychic experiences: it is impossible to know if one is hallucinating (is my perception right? or is it yours? or is it the algorithm’s?), and to understand if reality is “altered” or “other”.

This can also be applied on a larger scale, to recognize how hallucinations appear socially, in diffused ways, or even how they may be caused to create specific dynamics, balances, peace, violence in communities and society.

source: https://www.theguardian.com/technology/2016/jun/14/zuckerberg-telepathy-facebook-live-video-seinfeld

And this is, in fact, one of the principal reasons why subjects like Facebook are so interested in telepathy: Intelligent Agents and Telepathy.

In our BigData world, in which we produce inhuman quantities and qualities of data, the only way to proceed is inhuman: AI, Artificial Intelligence. Our only way in which to process all of these data and information is through software agents.

To do it, computers and software have to understand people and the world. To understand how they behave, operate, think. In general: to understand how they constantly perform psychic acts.

This is why they (and we) need to become trans-species telepaths, able to materialize psychic acts across subjects, also of the non-human type.

Machines have to learn how to recognize, classify, predict, decide, relate. And we do, too, with machines.

To follow this scenario, we have to work at Meta-levels. Then MetaMeta. Then MetaMetaMeta….

First it’s the production of smart and intelligent HW and SW.

Then it’s the creation of software which, given certain principles, creates intelligent software.

It’s neural networks.

Which also start producing meta-meta: software which starts creating principles to make intelligent software, based on certain principles we give it.

And MetaMetaMeta. And MetaMetaMetaMeta. And so on.

But this gets really complex really soon, producing Alien Knowledge:

This goes on and at a certain point, researchers like David Weinberger start wondering about the fact that we are absolutely not able anymore to understand how these intelligent agents work.

The HR algorithm of your company will fire you, but you will never be able to know why.

And these are those same agents which, as we saw, influence our perception of the city.

They are the agencies which create the bubbles of information and knowledge which we use to navigate the city every day: cities, which were already Bubble cities, become Alien Cities.

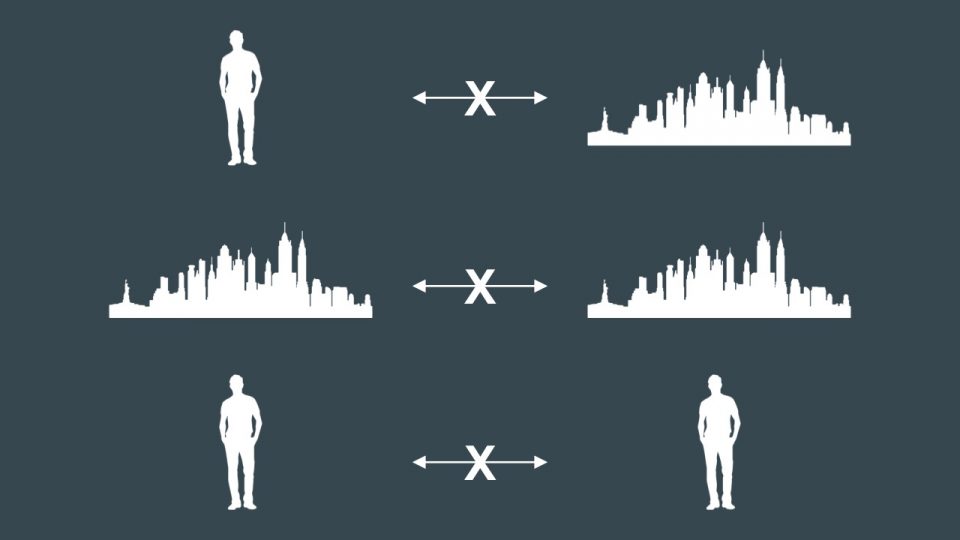

This creates multiple types of separation:

- me from parts of the city, because I don’t perceive them

- between different people, because they don’t cross each other

- between different parts of the city, because they are unreachable, unperceivable, unknowable

This is the concept of the Constrained Cities.

Cities in which people are systematically excluded from certain parts of the city, through data.

Because data and algorithms and software agents minimize the probability that they perceive these parts of the city, and the probability that they go there, stop there, wander off there, etc.

The Constrained cities are already a reality (see the experiments): Algorithms already have their own idea about what parts of the city we should see, and which we should not.

To make this concept more communicative, we have developed a dystopian version, involving obligatory versions of the Constrained Cities, and pain.

How does it work

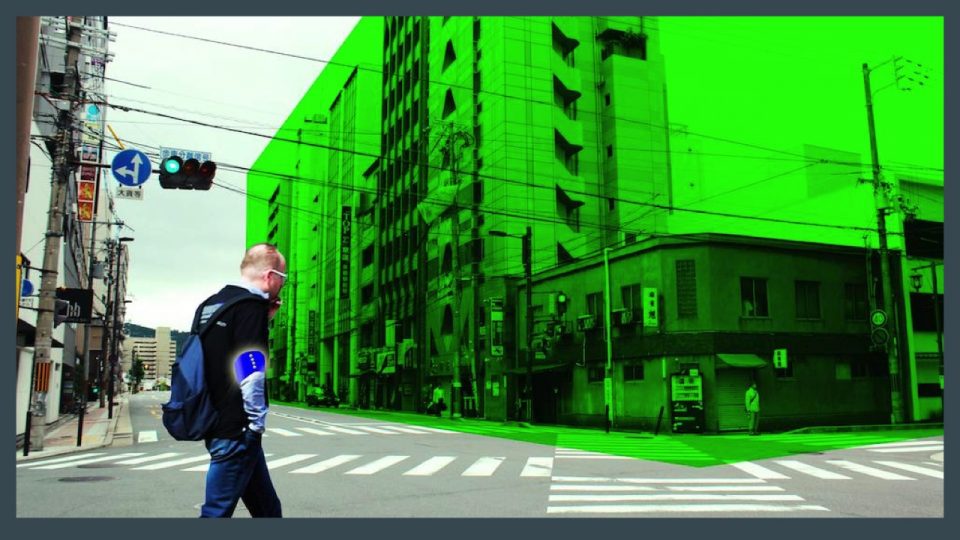

- wearable, pocketable, nomadic, ubiquitous technologies

- generate data all the time

- which is captured by algorithms

- which use it and act as filter on reality

Constrained Cities are dynamic and polyphonic.

They change over time and context. And multiple agencies produce multiple impacts, which can also conflict with each other.

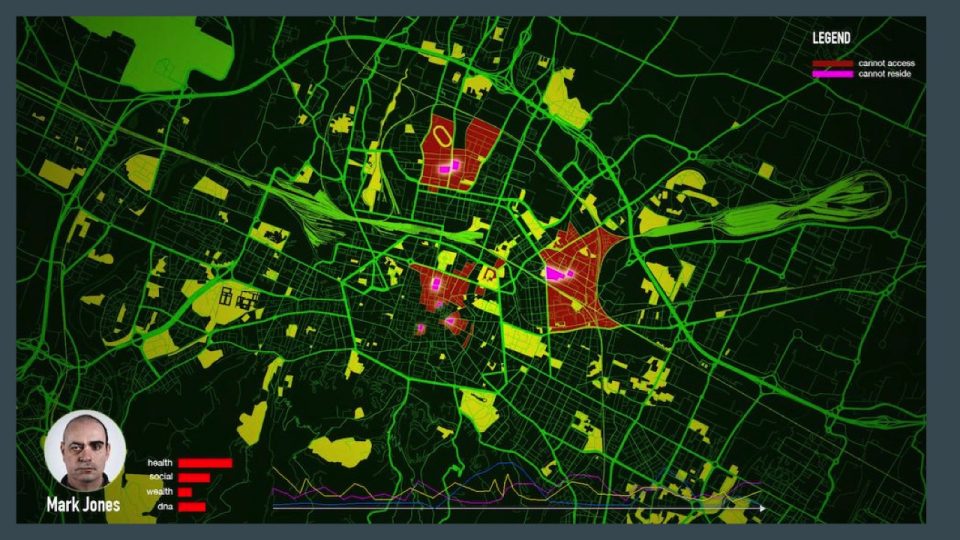

In this dystopian scenario, algorithms have their idea about what part of the city is suitable for each subject, based on revenue, wealth, tastes, behavior, relational networks, health: each person has their own map of the city in which parts are visible or invisible/inaccessible.

Parameters overlay the territory and its navigability: the map literally becomes the territory.

This is enacted in multiple ways:

- from simple and apparently less invasive ones such as control of the availability of information (possible places in answer to queries – a restaurant or library, for example – disappear from the results)

- to the economic ones (the metro ticket to go to a certain location for you costs more than for someone else; or the price for a beer in a certain place for you is higher, or you get a fine for entering a certain zone of the city)

- to the physical ones, featuring impacts onto the body

In this scenario, entire parts of the city are excluded from your access, or they may even disappear from your perception, using one of the mechanisms which we analyzed.

People could be separated from each other: as in the story we presented, the two lovers were separated from each other due to the changing condition of the algorithms.

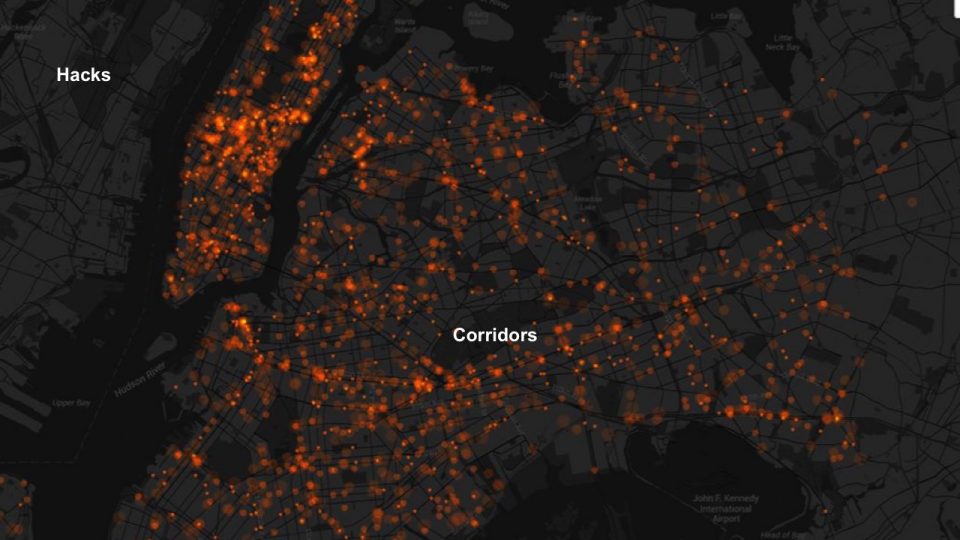

Phenomena of data-driven segregation could be imagined: what would happen if, during a migrant crisis, the algorithms formed a corridor for migrants to traverse? It would be data-driven segregation.

And, of course, as with all systems, the Constrained City would be neither perfect nor complete, and hacks would be possible and common, creating a layer of transgression which would allow, for example, unauthorized corridors to form, just as narrated in the story/paper, where a peer-to-peer application was created to anonymously sell unauthorized passages through the Constrained City.

Transgressions and hacks would also come under the forms of failures of the Constrained City, which would allow for even more unauthorized, unforeseen, unpredictable, unexpected behaviors of the city to emerge.

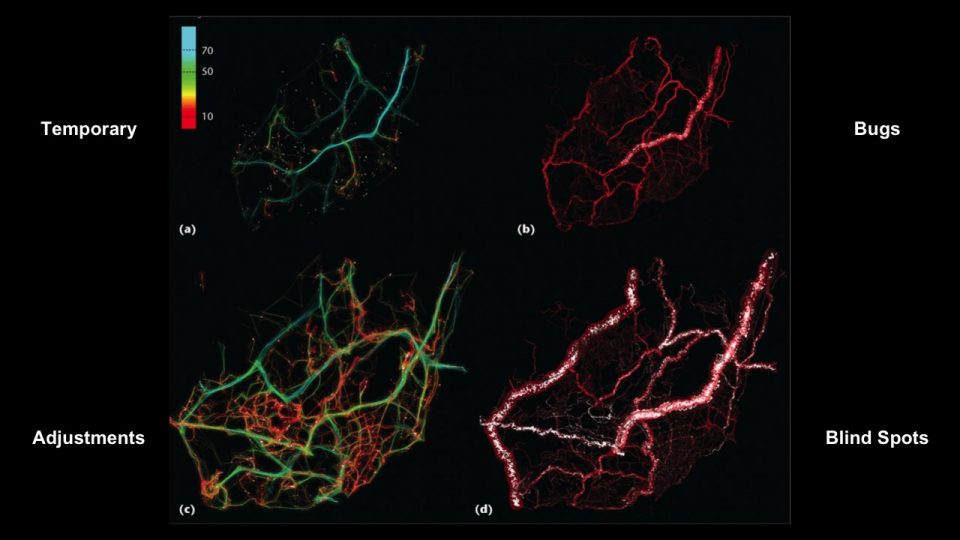

Among these:

- Temporality, according to which the Constrained City has temporary configurations, which mutate together with people’s behavior; some of these changes may bring about inconsistencies, just like in the story, in which the protagonists find themselves in a certain place just as the configuration is changing, making it inaccessible for them while they’re in it;

- Bugs, with malfunctions that may cause all sorts of unexpected behaviors

- Adjustments, in which shifts in configuration could cause disasters while they happen, in the transition

- and Blind Spots, because there will be spots in which the wifi signal is not 100%, or the cameras and sensors are not able to capture data, or the device is broken, etc; here will be raves, all sorts of illegalities, extremes, excesses, opportunities.

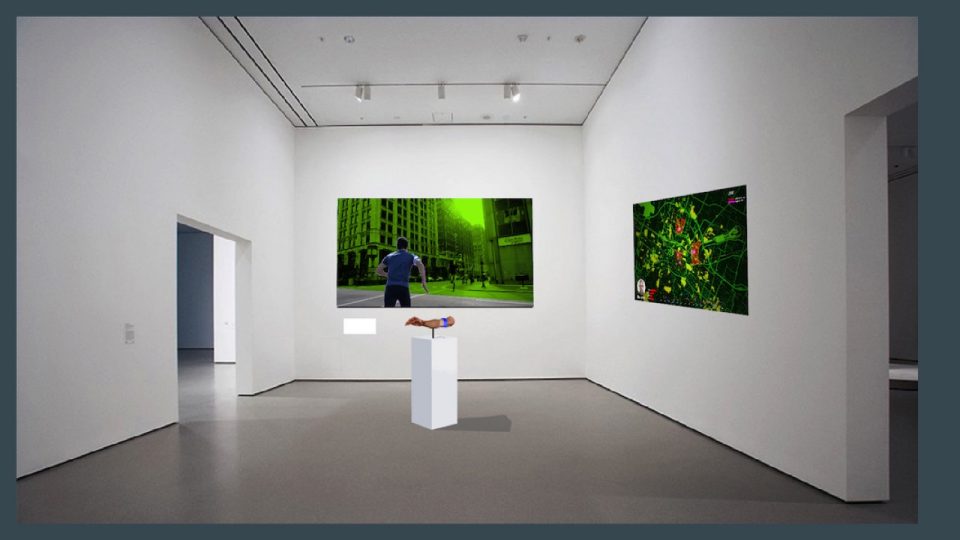

The exhibit of the Constrained Cities will feature the wearable device, which will be tuned to a certain person; the fiction, through a video that shows the story narrated in the paper in cinematic version; and a series of personal maps showing, for the city in which the exhibit is held, the parts of the city which are excluded from access for the authors.

The exhibits will also be walking exhibits. Using multiple copies of the wearable device, visitors will walk in the city. By walking they will feel the Constrained City on their skin, because their wearable will vibrate when they enter a place in the city they should not access, according to the algorithms.
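The logic behind the vibration can be sketched in a few lines. This is only an illustration under assumptions: the rectangular `Zone` type, the function `should_vibrate` and the coordinates are invented for the example, and the actual device may represent excluded places in a completely different way.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Zone:
    """A hypothetical excluded area, simplified to a lat/lon bounding box."""
    south: float
    north: float
    west: float
    east: float

    def contains(self, lat: float, lon: float) -> bool:
        return (self.south <= lat <= self.north
                and self.west <= lon <= self.east)


def should_vibrate(lat: float, lon: float,
                   excluded_zones: Iterable[Zone]) -> bool:
    """True when the wearer stands inside any zone excluded for them."""
    return any(zone.contains(lat, lon) for zone in excluded_zones)


# Made-up personal map with a single excluded zone.
personal_map = [Zone(south=44.49, north=44.50, west=11.34, east=11.35)]
print(should_vibrate(44.495, 11.345, personal_map))  # True
print(should_vibrate(44.480, 11.345, personal_map))  # False
```

Each visitor would carry their own list of zones, which is what makes the map personal.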

Conclusions

This short story is a Design Fiction which is part of Near Future Design, a speculative design technique and methodology which we use in our practice to produce scenarios which are the result of systemic research on the topics which we deal with (for example, we do it with Nefula).

This Story is based on our research on the Constrained Cities, a dystopian vision of the near future of the cities which we use to investigate potential risks and implications which derive directly from the concepts we all are designing and implementing, as a global community of engineers, designers, technologists, policy makers, entrepreneurs, researchers, practitioners, artists, citizens.

As seen in the references of the story, all that is mentioned in the fiction is something existing now: maybe in prototypal form, but existing, possible, and actively developed. It is a Near Future Design research, in which current research trends are interpreted in terms of evolutive tensions. This story is a “What If?” type of interrogation onto these evidence-based findings.

The future does not have to be scary. As authors we could have invented a completely different story: a happy one, fun, and with a great, wonderful, positive ending. Maybe we will create such a story for our next article.

Here, we wanted to explore an issue which, in our opinion, is in great need of discussion: the ways in which technologies control us, our bodies, intentions and perception.

As we design, develop and achieve wonderful, effective, sustainable services and infrastructures for our cities, we are also locking ourselves up in knowledge, relational and philosophical bubbles. Here, we are facing risks which profoundly affect our ability to positively confront diversity and what is unexpected and unforeseen.

What is clear is how these bubbles, on the one hand, reduce (or eliminate altogether) the space for transgression in the city. And, on the other hand, they reduce our perceptive space and landscape, up to the point in which, as in the story, concepts, places, relations may disappear, leaving us with a biased, egocentric, consumeristic, controlled world.

Furthermore, this condition is a condition of remarkable asymmetry in power, or, more precisely, of Biopower. A Biopower which is in data and interfaces, and in their closedness, controlled affordances, opaqueness, lack of interoperability and transparency, and in the constant trade-off between comfort, convenience and availability, and the possibility for critique, complexity and responsibility.

A story – and, thus, research – of this kind may bring on different reactions. Our reaction, as researchers, artists and free (libre) citizens is to dedicate precise efforts to make sure that these issues do not remain a science fiction tale, but wake other people’s desire, imagination and intelligence, to become items for active discussion and agency.

In our opinion and understanding, there are both enormous implications and opportunities in this, whether we approach them from a Design education and practice perspective, or in Engineering theories and practice, in Culture and cultural production, and in all the technologies, research, artworks, conferences, workshops, client commissions, research projects we use and conduct in our practice, and across our daily lives.

![[ AOS ] Art is Open Source](https://www.artisopensource.net/network/artisopensource/wp-content/uploads/2020/03/AOSLogo-01.png)