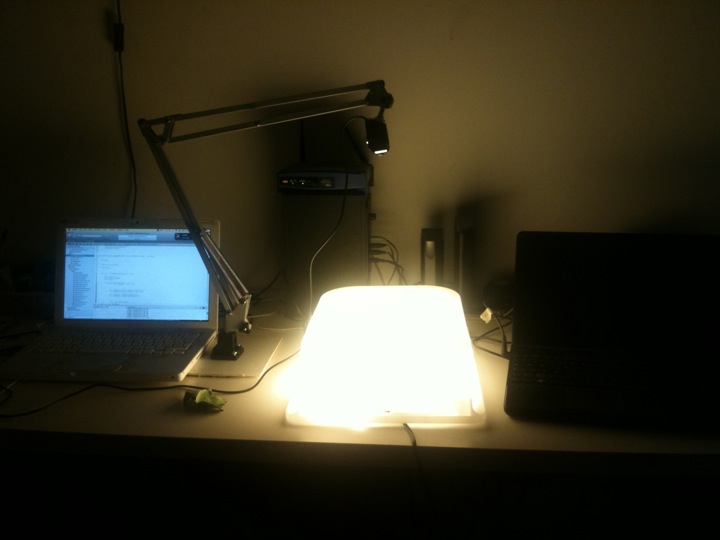

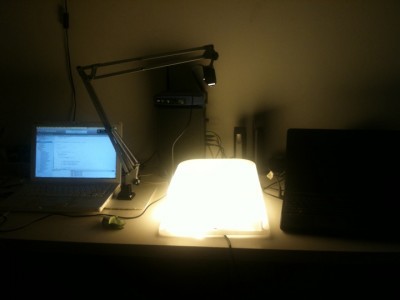

This video shows the setup of the Leaf++ augmented reality performance.

This is the second component of the Leaf++ project, a collaborative environment that uses computer vision and augmented reality to recognize and identify leaves. Once recognized, leaves can be used to share information and media (which can be attached to them using a mobile application) and can be used in performance settings.

This video shows the second component. Leaves are placed on a lightbox, where a webcam and a computer vision algorithm recognize them using the leaf descriptors created with the mobile application.
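The post does not describe the format of the leaf descriptors or how they are matched. As a minimal sketch, assuming each descriptor is a short vector of moment-style shape features, recognition could be a nearest-neighbour search over log-scaled features, with a distance threshold that rejects shapes matching no known leaf. The function names, the feature vectors and the `max_distance` threshold are all hypothetical, not the Leaf++ implementation:

```python
import math

def log_scale(features):
    # sign(v) * log10(|v|): compresses moment-style features that span
    # many orders of magnitude; exact zeros are kept as 0.
    return [(1 if v > 0 else -1) * math.log10(abs(v)) if v != 0 else 0.0
            for v in features]

def match_leaf(query, descriptors, max_distance=1.0):
    """Return the name of the closest stored leaf descriptor, or None.

    query:       feature vector measured on a contour seen by the webcam.
    descriptors: dict of leaf name -> stored feature vector (hypothetically
                 the descriptors saved by the mobile application).
    """
    q = log_scale(query)
    best_name, best_dist = None, float("inf")
    for name, stored in descriptors.items():
        s = log_scale(stored)
        # Euclidean distance between the log-scaled feature vectors.
        dist = math.sqrt(sum((a - b) ** 2 for a, b in zip(q, s)))
        if dist < best_dist:
            best_name, best_dist = name, dist
    # Shapes far from every known leaf (fingers, noise) are rejected.
    return best_name if best_dist <= max_distance else None
```

For example, with `db = {"oak": [0.2, 0.01, 0.001], "maple": [0.3, 0.05, 0.0001]}`, the call `match_leaf([0.21, 0.011, 0.0011], db)` returns `"oak"`. The log scaling keeps tiny higher-order features from being drowned out by the larger ones.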

When leaves are recognized, their contours are fed into a sound synthesizer algorithm and can be played live.
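How the contours drive the synthesizer is not documented here. One common way to make a shape audible, shown below as an assumption rather than the Leaf++ algorithm, is to turn the outline's radial profile (the distance of each contour point from the centroid) into one cycle of a wavetable, which can then be played at any pitch. `contour_to_wavetable` and `play` are hypothetical names:

```python
import math

def contour_to_wavetable(contour, size=256):
    """Turn a closed leaf outline into one cycle of a waveform in [-1, 1].

    contour: ordered list of (x, y) points along the outline.
    """
    n = len(contour)
    cx = sum(x for x, _ in contour) / n
    cy = sum(y for _, y in contour) / n
    # Radial profile: distance of each outline point from the centroid.
    radii = [math.hypot(x - cx, y - cy) for x, y in contour]
    lo, hi = min(radii), max(radii)
    if hi - lo < 1e-9:
        # A constant radius (e.g. a circle) gives no waveform: return silence.
        return [0.0] * size
    table = []
    for i in range(size):
        # Resample to `size` points with linear interpolation; the index
        # wraps around because the outline is closed.
        pos = i * n / size
        j = int(pos)
        frac = pos - j
        r = radii[j] * (1 - frac) + radii[(j + 1) % n] * frac
        table.append(2.0 * (r - lo) / (hi - lo) - 1.0)
    return table

def play(table, freq, sample_rate=44100, n_samples=441):
    """Read the wavetable at `freq` Hz (nearest-sample lookup, no filtering)."""
    step = freq * len(table) / sample_rate
    return [table[int(k * step) % len(table)] for k in range(n_samples)]
```

Because the table stores only the shape, the pitch is chosen at playback time, which is one way a recognized leaf could be played live like an oscillator.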

As you can see in several parts of the video, the fingers moving the leaves around the lightbox are also detected and tracked as blobs, but they do not generate any sound: only the contours of recognized leaves are used for synthesis.
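The separation between tracked blobs and sounding leaves can be sketched as follows, assuming a simple greedy nearest-centroid tracker (a swapped-in technique; the post does not say which tracker is used) and a per-blob `leaf` field that stays `None` unless recognition succeeded. `Blob`, `CentroidTracker`, `max_jump` and `sounding` are hypothetical names:

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass
class Blob:
    blob_id: int                   # stable ID assigned by the tracker
    centroid: Tuple[float, float]  # position on the lightbox image
    leaf: Optional[str] = None     # None for fingers or unrecognized shapes

class CentroidTracker:
    """Greedy nearest-centroid tracker: keeps blob IDs stable across frames."""

    def __init__(self, max_jump=40.0):
        self.max_jump = max_jump  # max pixels a blob may move between frames
        self.blobs: List[Blob] = []
        self.next_id = 0

    def update(self, detections):
        """detections: list of (centroid, leaf_name_or_None) for this frame."""
        unmatched = list(self.blobs)
        updated = []
        for centroid, leaf in detections:
            best = min(unmatched, key=lambda b: math.dist(b.centroid, centroid),
                       default=None)
            if best is not None and math.dist(best.centroid, centroid) <= self.max_jump:
                unmatched.remove(best)  # reuse the ID of the nearest old blob
                blob_id = best.blob_id
            else:
                blob_id = self.next_id  # nothing close enough: a new blob
                self.next_id += 1
            updated.append(Blob(blob_id, centroid, leaf))
        self.blobs = updated
        return updated

def sounding(blobs):
    """Every blob is tracked, but only recognized leaves reach the synthesizer."""
    return [b for b in blobs if b.leaf is not None]
```

Keeping fingers in the tracker rather than discarding them is a deliberate choice in this sketch: they stay visible for interaction or display while being excluded from sound generation.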

An interesting experiment we performed (a short video is coming soon) uses basic sine waveforms generated from the amplitudes and moments of the leaf contours (Hu invariant moments). When you close your eyes and listen to the sounds generated by different types of leaves, it is possible to recognize them. This is a promising area of research for people with disabilities, who might use these techniques to identify objects around them.
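Hu invariant moments are seven combinations of normalized central moments that stay the same when a shape is translated, scaled or rotated. The sketch below computes them from a binary leaf mask and then, under a purely hypothetical mapping (moment *i* sets the amplitude of the (*i*+1)-th harmonic of 220 Hz), sums the resulting sine waves. It illustrates the idea only; it is not the mapping used in the experiment, and `hu_to_partials` and `synthesize` are hypothetical names:

```python
import math

def hu_moments(mask):
    """The seven Hu invariant moments of a binary mask (rows of 0/1 values)."""
    pts = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    m00 = len(pts)
    xc = sum(x for x, _ in pts) / m00
    yc = sum(y for _, y in pts) / m00

    def eta(p, q):
        # Normalized central moment mu_pq / mu_00^(1 + (p + q) / 2).
        mu = sum((x - xc) ** p * (y - yc) ** q for x, y in pts)
        return mu / m00 ** (1 + (p + q) / 2)

    n20, n02, n11 = eta(2, 0), eta(0, 2), eta(1, 1)
    n30, n03, n21, n12 = eta(3, 0), eta(0, 3), eta(2, 1), eta(1, 2)
    a, b = n30 + n12, n21 + n03
    return [
        n20 + n02,
        (n20 - n02) ** 2 + 4 * n11 ** 2,
        (n30 - 3 * n12) ** 2 + (3 * n21 - n03) ** 2,
        a ** 2 + b ** 2,
        (n30 - 3 * n12) * a * (a ** 2 - 3 * b ** 2)
        + (3 * n21 - n03) * b * (3 * a ** 2 - b ** 2),
        (n20 - n02) * (a ** 2 - b ** 2) + 4 * n11 * a * b,
        (3 * n21 - n03) * a * (a ** 2 - 3 * b ** 2)
        - (n30 - 3 * n12) * b * (3 * a ** 2 - b ** 2),
    ]

def hu_to_partials(hu, base_freq=220.0):
    """Hypothetical mapping: moment i drives the (i+1)-th harmonic of base_freq.

    The further a moment is from 1 in orders of magnitude, the quieter its
    partial; moments that are (almost) zero are silent.
    """
    partials = []
    for i, h in enumerate(hu):
        amp = 0.0 if abs(h) < 1e-15 else 1.0 / (1.0 + abs(math.log10(abs(h))))
        partials.append((base_freq * (i + 1), amp))
    return partials

def synthesize(partials, sample_rate=8000, n_samples=400):
    """Additive synthesis: a sum of sines, scaled so the peak stays within [-1, 1]."""
    total = sum(amp for _, amp in partials) or 1.0
    return [
        sum(amp * math.sin(2 * math.pi * f * k / sample_rate) for f, amp in partials) / total
        for k in range(n_samples)
    ]
```

A leaf mask would be passed through `hu_moments`, then `hu_to_partials`, then `synthesize`. Since the moments barely change when a leaf is moved or rotated on the lightbox, the same leaf keeps producing roughly the same sound, which is what would make recognizing leaves by ear possible.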

This part of the project is implemented using openFrameworks, and a video documenting a version for iPhone and Android is coming soon.

More information about Leaf++ is available on the website:

https://www.artisopensource.net/category/projects/leaf-plusplus/

![[ AOS ] Art is Open Source](https://www.artisopensource.net/network/artisopensource/wp-content/uploads/2020/03/AOSLogo-01.png)